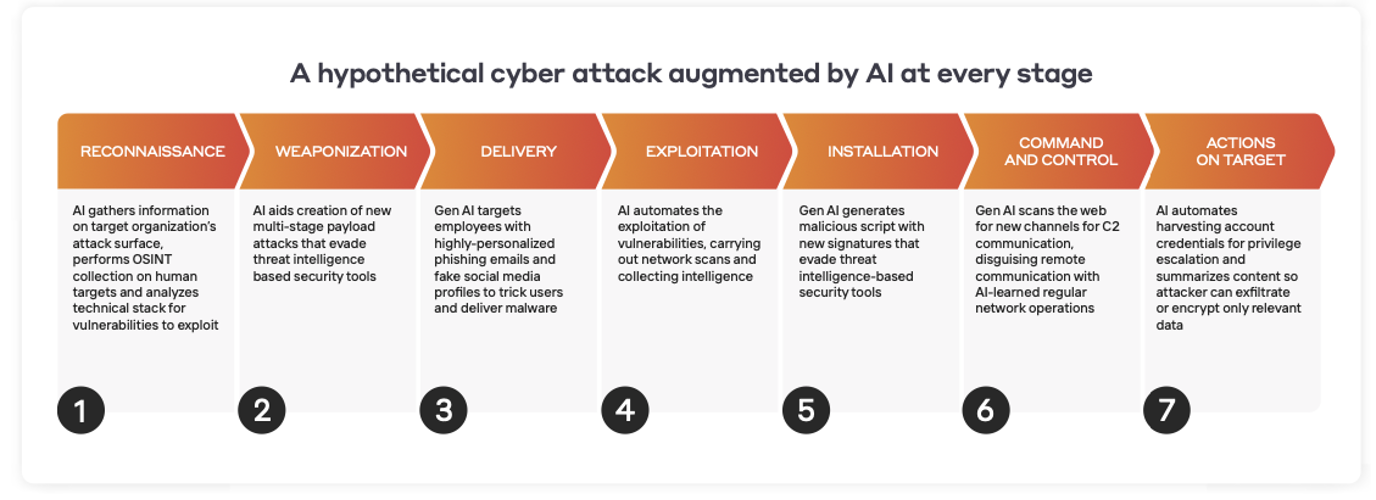

Innovations in artificial intelligence (AI) have fundamentally changed the email security landscape in recent years, but it can often be hard to determine what makes one system different from the next. In reality, under that umbrella term there is a significant distinction in approach, one that may determine whether the technology provides genuine protection or merely the perception of defense.

One backward-looking approach involves feeding a machine thousands of emails that have already been deemed to be malicious, and training it to look for patterns in these emails in order to spot future attacks. The second approach uses an AI system to analyze the entirety of an organization’s real-world data, enabling it to establish a notion of what is ‘normal’ and then spot subtle deviations indicative of an attack.

Below, we compare the relative merits of each approach, with special consideration of novel attacks that leverage the latest news headlines to bypass machine learning systems trained on historical data sets. Training a machine on previously identified ‘known bads’ is only advantageous in specific contexts that don’t change over time, such as recognizing the intent behind an email. However, an effective email security solution must also incorporate a self-learning approach that understands ‘normal’ in the context of an organization, so that it can identify unusual and anomalous emails and catch even novel attacks.

Signatures – a backward-looking approach

Over the past few decades, cyber security technologies have sought to mitigate risk by preventing previously seen attacks from occurring again. In the early days, when the lifespan of a given strain of malware or of an attack’s infrastructure was measured in months or years, this method was satisfactory. But the approach inevitably results in playing catch-up with malicious actors: it always looks to the past to guide detection in the future. As attack lifetimes shrink, to the point where a domain may be used in a single email and never seen again, this signature-based approach is being widely replaced by more intelligent systems.

Training a machine on ‘bad’ emails

The first AI approach we often see in the wild involves harnessing an extremely large data set of thousands or millions of emails. Once these emails have been collected, an AI is trained to look for common patterns in the malicious ones. The system then updates its models, rule sets, and blacklists based on that data.

This method certainly represents an improvement on traditional rules and signatures, but it does not escape the fact that it is still reactive, and unable to stop new attack infrastructure and new types of email attack. It simply automates that flawed, traditional approach: instead of a human updating the rules and signatures, a machine updates them.

Relying on this approach alone has one basic but critical flaw: it cannot stop types of attack it has never seen before. It accepts that there must be a ‘patient zero’ – or first victim – before it can learn to block an attack.
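To make the ‘patient zero’ problem concrete, here is a minimal Python sketch of a signature-style check, assuming the learned blocklist is simply a set of sender domains. The domain names and the helpers `is_blocked` and `report_missed_phish` are hypothetical illustrations, not any vendor’s implementation.

```python
# Hypothetical blocklist built from previously reported phishing emails.
# The .example domains are placeholders, not real indicators.
known_bad_domains: set[str] = {"invoice-update.example", "secure-login.example"}


def is_blocked(sender_domain: str, blocklist: set[str]) -> bool:
    """Signature-style check: flag an email only if its domain was seen before."""
    return sender_domain in blocklist


def report_missed_phish(sender_domain: str, blocklist: set[str]) -> None:
    """After a victim reports the email, the domain is added to the blocklist."""
    blocklist.add(sender_domain)


new_domain = "covid-relief-grant.example"  # first ever use of this domain
first_email_blocked = is_blocked(new_domain, known_bad_domains)   # False: patient zero
report_missed_phish(new_domain, known_bad_domains)
second_email_blocked = is_blocked(new_domain, known_bad_domains)  # True, but too late
```

The first email from the new domain gets through; only after someone reports it is the domain blocked, which is the catch-up loop described above.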

The industry is beginning to acknowledge the challenges with this approach, and huge amounts of resources, both automated systems and security researchers, are being thrown into minimizing its limitations. One such technique is “data augmentation”: a malicious email that slipped through is used to generate many “training samples”, with open-source text augmentation libraries creating “similar” emails. The machine then learns to treat not only the missed phish as ‘bad’, but several others like it, enabling it to detect future attacks that use similar wording and fall into the same category.
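The data augmentation idea can be sketched roughly as follows. This toy version swaps words for synonyms from a small hand-written table; the `SYNONYMS` table and the `augment` function are hypothetical, and real pipelines would typically rely on an open-source text augmentation library rather than this exhaustive substitution.

```python
import itertools

# Hypothetical synonym table for illustration only.
SYNONYMS: dict[str, list[str]] = {
    "urgent": ["immediate", "pressing"],
    "invoice": ["bill", "payment request"],
    "verify": ["confirm", "validate"],
}


def augment(text: str, synonyms: dict[str, list[str]]) -> list[str]:
    """Return every variant of `text` obtained by swapping words for listed synonyms."""
    words = text.split()
    # Each position can keep its original word or take any of its synonyms.
    options = [[w] + synonyms.get(w.lower(), []) for w in words]
    variants = {" ".join(combo) for combo in itertools.product(*options)}
    variants.discard(text)  # the original is already in the training set
    return sorted(variants)


missed_phish = "urgent please verify the attached invoice"
samples = augment(missed_phish, SYNONYMS)
# Label the missed phish and all of its variants as malicious training data.
training_set = [(s, "malicious") for s in [missed_phish, *samples]]
print(len(samples))  # 26
```

Every generated sample is still a variation on the same theme (urgent invoices, verification requests), which is why augmentation helps with similar wording but not with an entirely new topic.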

But spending all this time and effort trying to fix an unsolvable problem is like putting all your eggs in the wrong basket. Why try to fix a flawed system rather than change the game altogether? To spell out the limitations of this approach, let us look at a situation where the nature of the attack is entirely new.

The rise of ‘fearware’

When the global pandemic hit, and governments began enforcing travel bans and imposing stringent restrictions, there was undoubtedly a collective sense of fear and uncertainty. As explained previously in this blog, cyber-criminals were quick to capitalize on this, taking advantage of people’s desire for information to send out topical emails related to COVID-19 containing malware or credential-grabbing links.

These emails often spoofed the Centers for Disease Control and Prevention (CDC), or later on, as the economic impact of the pandemic began to take hold, the Small Business Administration (SBA). As the global situation shifted, so did attackers’ tactics. And in the process, over 130,000 new domains related to COVID-19 were purchased.

Let’s now consider how the above approach to email security might fare when faced with these new email attacks. The question becomes: how can you train a model to look out for emails containing ‘COVID-19’, when the term hasn’t even been invented yet?
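A simplified illustration of this vocabulary problem: assume a model scores emails by summing per-word weights it learned from older phishing data. The `LEARNED_WEIGHTS` values below are invented for illustration, and real supervised models are far more sophisticated, but a similar limitation applies to any model whose features are tied to its training vocabulary: a term that never appeared in training has no learned weight.

```python
# Hypothetical per-word weights learned from pre-2020 phishing data.
# Positive values push toward "malicious"; the numbers are illustrative only.
LEARNED_WEIGHTS: dict[str, float] = {
    "invoice": 1.2,
    "password": 1.5,
    "urgent": 0.8,
    "meeting": -0.7,
}


def score(email_text: str, weights: dict[str, float]) -> float:
    """Sum the learned weight of each token; tokens never seen in training add 0."""
    return sum(weights.get(tok, 0.0) for tok in email_text.lower().split())


def unknown_tokens(email_text: str, weights: dict[str, float]) -> list[str]:
    """Tokens the model has no learned weight for."""
    return [tok for tok in email_text.lower().split() if tok not in weights]


lure = "COVID-19 relief fund: claim your grant today"
print(score(lure, LEARNED_WEIGHTS))           # 0.0
print(unknown_tokens(lure, LEARNED_WEIGHTS))  # every token is unknown
```

The lure receives the same score as an empty email, so nothing about its topic can push it over whatever threshold the model uses.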

And while COVID-19 is the most salient example, the same reasoning applies to every novel and unexpected news cycle that attackers leverage in their phishing emails, both to evade tools using this approach and, as a bonus, to attract the recipient’s attention. Moreover, if an email attack is truly targeted at your organization, it might contain bespoke references to a very specific event that supervised machine learning systems could never have been trained on.

This isn’t to say there’s not a time and a place in email security for looking at past attacks to set yourself up for the future. It just isn’t here.

Spotting intention

Darktrace uses this approach for one specific purpose that is future-proof and not prone to change over time: analyzing grammar and tone in an email to identify intention. It asks questions like ‘Does this look like an attempt at inducement? Is the sender trying to solicit sensitive information? Is this extortion?’ By training a system on an extremely large data set collected over a period of time, you can start to understand what, for instance, inducement looks like. This then enables you to readily spot future instances of inducement based on a common set of characteristics.

Training a system in this way works because, unlike news cycles and the topics of phishing emails, fundamental patterns in tone and language don’t change over time. An attempt at solicitation is always an attempt at solicitation, and will always bear common characteristics.

Because its usefulness is limited to stable patterns like these, this approach plays only a small part in a much larger engine. It adds an indication of the nature of the threat, but is not itself used to determine whether an email is anomalous.
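As a rough sketch of intent classification, the snippet below trains a multinomial naive Bayes classifier on a handful of labeled phrases. Naive Bayes is a stand-in chosen for brevity, not the model Darktrace uses, and the six-example `TRAINING` set is hypothetical; the point is that the classifier keys on wording such as ‘send your bank details’ rather than on the topic of the email.

```python
import math
from collections import Counter

# Hypothetical, tiny labeled set; a real system trains on a very large corpus.
TRAINING = [
    ("please send your bank details today", "solicitation"),
    ("kindly confirm your password and account number", "solicitation"),
    ("we need your login details to process the payment", "solicitation"),
    ("here are the notes from our meeting", "benign"),
    ("the report is attached for your review", "benign"),
    ("lunch is booked for friday", "benign"),
]


def train(examples):
    """Count words per label for a multinomial naive Bayes model."""
    word_counts: dict[str, Counter] = {}
    label_counts: Counter = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts.setdefault(label, Counter()).update(text.split())
    vocab = {w for counts in word_counts.values() for w in counts}
    return word_counts, label_counts, vocab


def classify(text, model):
    """Return the label with the highest log posterior (add-one smoothing)."""
    word_counts, label_counts, vocab = model
    total_docs = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label, counts in word_counts.items():
        n_words = sum(counts.values())
        log_prob = math.log(label_counts[label] / total_docs)  # log prior
        for w in text.lower().split():
            # Unseen words get a small, non-zero probability via smoothing.
            log_prob += math.log((counts[w] + 1) / (n_words + len(vocab)))
        if log_prob > best_score:
            best_label, best_score = label, log_prob
    return best_label


model = train(TRAINING)
print(classify("COVID-19 grant: send your bank details", model))  # solicitation
print(classify("the meeting notes are attached", model))          # benign
```

Words such as ‘covid-19’ never appeared in training and receive only the small smoothing probability in each class, so the decision is driven by the solicitation-style wording. This mirrors the argument above: tone and intent generalize across topics in a way that topic-specific features do not.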

Detecting the unknown unknowns

In addition to using the above approach to identify intention, Darktrace uses unsupervised machine learning, which starts by extracting and extrapolating thousands of data points from every email. Some of these are taken directly from the email itself, while others can only be ascertained through the intention analysis described above. Further insights come from observing emails in the wider context of all available data across the organization’s email, network, and cloud environment.

Only once it has this significantly larger and more comprehensive set of indicators, giving a more complete description of the email, is the data fed into a topic-indifferent machine learning engine. The engine questions the data in millions of ways to determine whether the email belongs, given the wider context of the organization’s typical ‘pattern of life’. Monitoring all emails together allows the machine to establish things like:

- Does this person usually receive ZIP files?

- Does this supplier usually send links to Dropbox?

- Has this sender ever logged in from China?

- Do these recipients usually get the same emails together?
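Questions like these can be approximated with a very simple per-entity frequency baseline that needs no labeled attacks. This is a hypothetical sketch: the `PatternOfLife` class, the feature names, and the ‘rarity’ measure (one minus the fraction of an entity’s past emails that showed a given value) are assumptions for illustration, and a production system would model far more features and their interactions.

```python
from collections import Counter


class PatternOfLife:
    """Toy per-entity baseline built from observed emails; needs no labels."""

    def __init__(self) -> None:
        self.counts: Counter = Counter()  # (entity, feature, value) -> occurrences
        self.totals: Counter = Counter()  # entity -> number of emails observed

    def observe(self, entity: str, features: dict) -> None:
        """Record one email's features against the entity (sender or recipient)."""
        self.totals[entity] += 1
        for feature, value in features.items():
            self.counts[(entity, feature, value)] += 1

    def rarity(self, entity: str, feature: str, value) -> float:
        """1.0 = never seen for this entity; 0.0 = seen on every observed email."""
        total = self.totals[entity]
        if total == 0:
            return 1.0  # no history at all: treat as completely unfamiliar
        return 1.0 - self.counts[(entity, feature, value)] / total


# Hypothetical history: finance always receives PDFs; the supplier shares
# SharePoint links and logs in from the UK.
pol = PatternOfLife()
for _ in range(50):
    pol.observe("finance@corp.example", {"attachment": "pdf"})
    pol.observe("supplier-a.example", {"link_domain": "sharepoint.com", "login_country": "GB"})

zip_rarity = pol.rarity("finance@corp.example", "attachment", "zip")          # 1.0
dropbox_rarity = pol.rarity("supplier-a.example", "link_domain", "dropbox.com")  # 1.0
china_rarity = pol.rarity("supplier-a.example", "login_country", "CN")          # 1.0
usual_rarity = pol.rarity("supplier-a.example", "login_country", "GB")          # 0.0
```

Nothing here refers to phishing topics or known-bad indicators; a ZIP file arriving for this recipient stands out simply because it has never happened before. The question about recipients who usually receive emails together could be handled the same way, by treating the set of co-recipients as a feature value (for example a `frozenset`).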

The technology identifies patterns across an entire organization and gains a continuously evolving sense of ‘self’ as the organization grows and changes. It is this innate understanding of what is and isn’t ‘normal’ that allows AI to spot the truly ‘unknown unknowns’ instead of just ‘new variations of known bads.’

This type of analysis brings an additional advantage: it is language and topic agnostic. Because it focuses on anomaly detection rather than on finding specific patterns that indicate a threat, it is effective regardless of whether an organization typically communicates in English, Spanish, Japanese, or any other language.

By layering both of these approaches, you can understand the intention behind an email and whether that email belongs, given the context of normal communication. All of this is done without ever assuming or expecting that the threat has been seen before.

Years in the making

It is now well established that the legacy approach to email security has failed, and this makes it easy to see why existing recommendation engines are being applied to the cyber security space. At first glance, these solutions may appeal to a security team, but highly targeted, truly unique spear phishing emails easily skirt these systems. They can’t be relied on to stop email threats on the first encounter, as they depend on known attacks with previously seen topics, domains, and payloads.

An effective, layered AI approach takes years of research and development. There is no single mathematical model that solves the problem of distinguishing malicious emails from benign communication. A layered approach accepts that competing mathematical models each have their own strengths and weaknesses. It autonomously determines the relative weight each model should carry and combines them into an overall ‘anomaly score’, expressed as a percentage, indicating how unusual a particular email is compared with the organization’s wider email traffic.
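A minimal sketch of combining several model outputs into a single percentage, assuming each model emits a score between 0 and 1 and the scores are merged with a weighted average. The model names and the fixed weights are hypothetical; the approach described above determines the weights autonomously, which this sketch does not attempt.

```python
def combined_anomaly_score(model_scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of per-model scores in [0, 1], expressed as a percentage."""
    total_weight = sum(weights[name] for name in model_scores)
    if total_weight <= 0:
        raise ValueError("weights for the scored models must sum to a positive value")
    weighted_sum = sum(score * weights[name] for name, score in model_scores.items())
    return 100.0 * weighted_sum / total_weight


# Hypothetical per-model outputs for one email.
scores = {"recipient_rarity": 0.9, "sender_rarity": 0.6, "intent": 0.3}
weights = {"recipient_rarity": 2.0, "sender_rarity": 1.0, "intent": 1.0}
print(f"{combined_anomaly_score(scores, weights):.1f}%")  # 67.5%
```

A weighted average keeps the result on a 0 to 100 scale however many models contribute; deciding the weights is the hard part and is left out here.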

It is time for email security to drop, once and for all, the assumption that you can look at past threats to predict tomorrow’s attacks. An effective AI cyber security system can identify abnormalities without relying on historical attacks, enabling it to catch truly novel emails on the first encounter, before they land in the inbox.

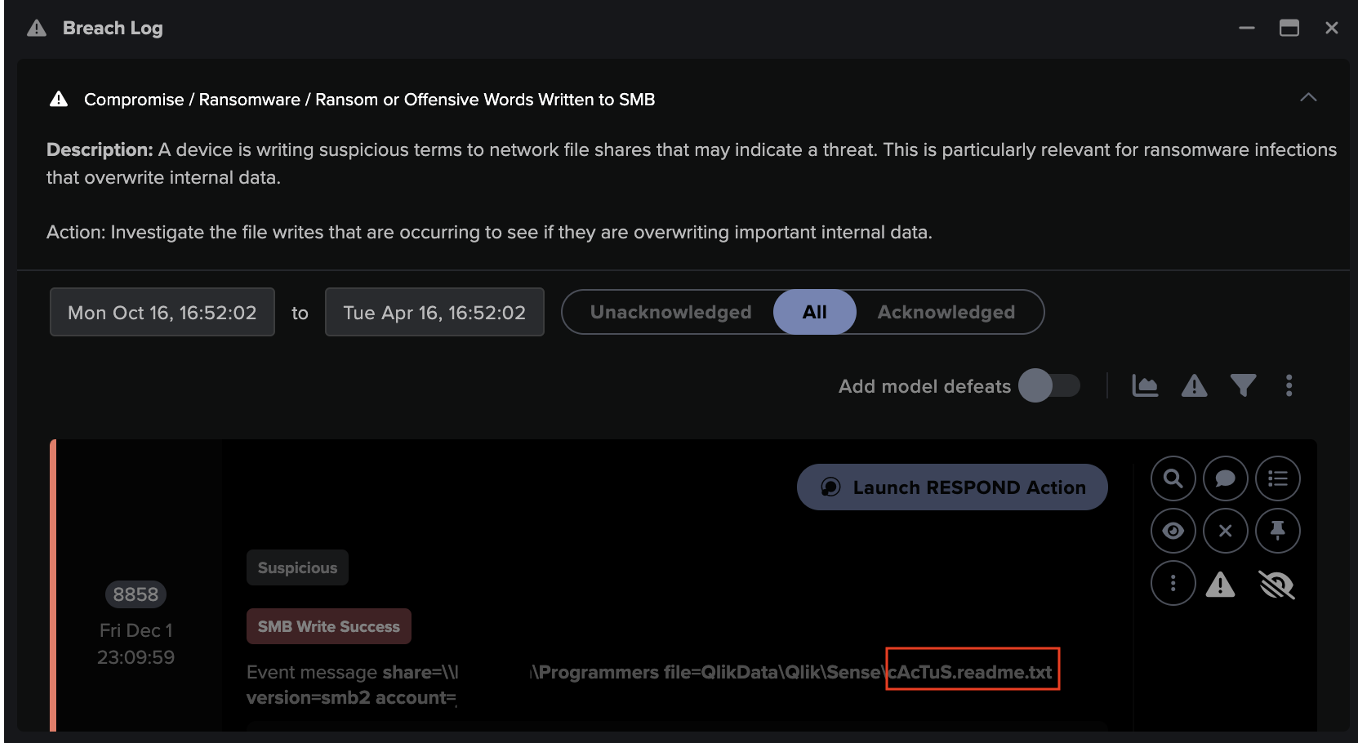
